Learn Spring Batch in a simple, step-by-step way. This series of Spring Batch tutorials is based on Spring Batch 3.

Spring Batch is a lightweight, open-source batch processing framework designed to handle day-to-day batch jobs that involve large volumes of data. Batch processing is the execution of a series of jobs, where each job may involve multiple steps.

Built on Spring's POJO-based development approach, Spring Batch provides APIs and default implementations to read and write resources, process high volumes of data, collect statistics, and manage transactions. It also offers advanced optimization and data-partitioning techniques to support parallel processing of batch jobs.

Spring Batch Architecture

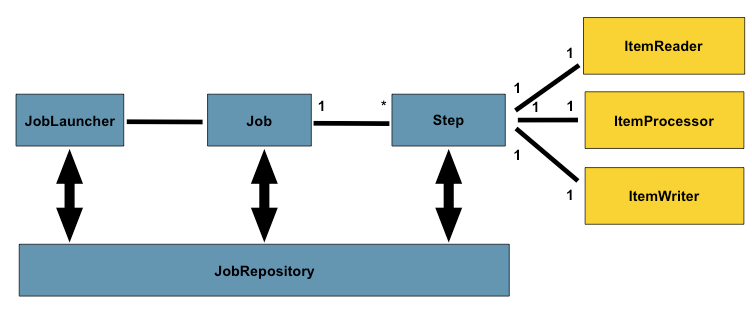

Below is the high level architecture of Spring Batch Processing

Source : Spring Batch Reference

A Job is composed of one or more Steps. Each Step works in one of two modes:

- Chunk-oriented processing, or READ-PROCESS-WRITE mode: the Step reads from a resource, processes the data, and then writes the processed data back to a resource. In this approach, the Step has one ItemReader (which reads from a resource such as a file, database, or message queue), an optional ItemProcessor (a hook for applying business logic), and one ItemWriter (which writes to a resource such as a file, database, or message queue).

- TASKLET mode: the Step performs a single operation (sending an email, executing a stored procedure, cleaning up files older than x days, etc.). In this approach the Step delegates to a bean implementing the Tasklet interface, which has only one method, execute, where such an operation is performed.

A Job is launched via a JobLauncher, and the JobRepository stores metadata about the currently running process. Note that Steps can be chained together.
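The read-process-write cycle described above can be modelled in a few lines of plain Java. This is only a rough sketch of the idea, not Spring Batch code: the class `ChunkModel`, the method `runStep`, and the use of `Iterator`, `Function`, and `Consumer` as stand-ins for a reader, processor, and writer are all assumptions made for illustration. The `commitInterval` parameter plays the role of the commit-interval attribute, that is, how many items are collected before they are written together.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Iterator;
import java.util.List;
import java.util.function.Consumer;
import java.util.function.Function;

// Simplified model of a chunk-oriented step. The names below are hypothetical
// stand-ins, not Spring Batch's ItemReader/ItemProcessor/ItemWriter.
class ChunkModel {

    /**
     * Reads up to commitInterval items, processes each one, then hands the
     * whole chunk to the writer; repeats until the reader is exhausted.
     */
    static <I, O> void runStep(Iterator<I> reader,
                               Function<I, O> processor,
                               Consumer<List<O>> writer,
                               int commitInterval) {
        if (commitInterval < 1) {
            throw new IllegalArgumentException("commitInterval must be >= 1");
        }
        while (reader.hasNext()) {
            // READ: collect one chunk of input items
            List<I> items = new ArrayList<>();
            while (items.size() < commitInterval && reader.hasNext()) {
                items.add(reader.next());
            }
            // PROCESS: apply the business logic to each item
            List<O> processed = new ArrayList<>();
            for (I item : items) {
                processed.add(processor.apply(item));
            }
            // WRITE: the chunk is handed to the writer as one unit
            writer.accept(processed);
        }
    }

    public static void main(String[] args) {
        List<List<String>> written = new ArrayList<>();
        ChunkModel.<String, String>runStep(
                Arrays.asList("a", "b", "c", "d", "e").iterator(),
                s -> s.toUpperCase(),
                chunk -> written.add(chunk),
                2);
        System.out.println(written); // [[A, B], [C, D], [E]]
    }
}
```

With five items and a chunk size of 2, the writer is called three times; the last chunk is simply smaller. A Tasklet step has no such loop: it runs its single operation once.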

Sample Configuration

    <job id="sampleJob" job-repository="jobRepository">
        <step id="step1" next="step2">
            <tasklet transaction-manager="transactionManager">
                <chunk reader="itemReader" writer="itemWriter" commit-interval="1"/>
            </tasklet>
        </step>
        <step id="step2" next="step3">
            <tasklet transaction-manager="transactionManager">
                <chunk reader="itemReader" writer="itemWriter" commit-interval="1"/>
            </tasklet>
        </step>
        <step id="step3">
            <tasklet ref="SingleOperationTasklet"/>
        </step>
    </job>

The configuration above defines three steps in our sample job. Step1 works in chunk-oriented mode: it reads from a resource and writes to a resource (no processor is configured here, so items go from the reader straight to the writer). Step2 is triggered once Step1 completes (via the next attribute). Step2 also runs in chunk-oriented mode and triggers Step3 once it completes. Step3 works in Tasklet mode: it performs a single operation (ref refers to a bean that implements the Tasklet interface and provides the execute method).
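To make the chaining concrete, here is a small plain-Java model of how a next reference sequences steps. It is a sketch under stated assumptions rather than Spring Batch's implementation: the class `StepFlowModel`, its nested `Step` type, and the `runJob` method are hypothetical names, and each step is reduced to a `Runnable` plus the id of the step that follows it.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Simplified model of sequential step flow. Step and runJob are hypothetical
// names used for illustration, not Spring Batch classes.
class StepFlowModel {

    // A step is an action plus the id of the step to run next (null = last step).
    static final class Step {
        final Runnable action;
        final String next;

        Step(Runnable action, String next) {
            this.action = action;
            this.next = next;
        }
    }

    /** Runs steps from startId, following each step's next id; returns the ids in execution order. */
    static List<String> runJob(Map<String, Step> steps, String startId) {
        List<String> executed = new ArrayList<>();
        String current = startId;
        while (current != null) {
            Step step = steps.get(current);
            if (step == null) {
                throw new IllegalStateException("Unknown step: " + current);
            }
            if (executed.contains(current)) {
                throw new IllegalStateException("Cycle detected at step: " + current);
            }
            // An exception thrown here propagates, so later steps do not run.
            step.action.run();
            executed.add(current);
            current = step.next;
        }
        return executed;
    }

    public static void main(String[] args) {
        Map<String, Step> steps = new LinkedHashMap<>();
        steps.put("step1", new Step(() -> System.out.println("chunk step 1"), "step2"));
        steps.put("step2", new Step(() -> System.out.println("chunk step 2"), "step3"));
        steps.put("step3", new Step(() -> System.out.println("single-operation tasklet"), null));
        System.out.println(runJob(steps, "step1")); // [step1, step2, step3]
    }
}
```

The step without a next id ends the job, which mirrors step3 in the sample configuration.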

Spring Batch also lets you add listeners at the job and step level. Listeners provide an opportunity to run business logic before or after a job or step. For example, if you want to create a directory or move existing data to another directory before the actual file-processing job runs, you can use a job listener: it provides a hook that is called just before the job starts and another at the completion of the job (to perform any follow-up logic).

A job-level listener has a beforeJob method, called just before the job starts, and an afterJob method, called just after the job completes. Similarly, a step-level listener has a beforeStep method, called just before the step starts, and an afterStep method, called just after the step completes.

Below is a sample configuration with a listener at the job level:

    <job id="sampleJob" job-repository="jobRepository">
        <step id="step1">
            <tasklet>
                <chunk reader="itemReader" writer="itemWriter" commit-interval="1"/>
            </tasklet>
        </step>
        <listeners>
            <listener ref="jobListener"/>
        </listeners>
    </job>

Here, jobListener is a user-defined Spring bean implementing JobExecutionListener.
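The before/after hook pattern can be illustrated with a small plain-Java model. This is a hedged sketch, not the Spring Batch API: `JobListenerModel`, its nested `JobListener` interface, and the `launch` method are hypothetical stand-ins. The real JobExecutionListener methods receive a JobExecution object rather than a job name, and the try/finally below is an assumption of this model about when the after hook fires.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Simplified model of job-level listener hooks. JobListener and launch are
// hypothetical stand-ins; Spring Batch's real JobExecutionListener methods
// take a JobExecution argument instead of a job name.
class JobListenerModel {

    interface JobListener {
        void beforeJob(String jobName);
        void afterJob(String jobName);
    }

    /** Calls beforeJob on every listener, runs the job body, then calls afterJob. */
    static void launch(String jobName, Runnable jobBody, List<JobListener> listeners) {
        for (JobListener listener : listeners) {
            listener.beforeJob(jobName);
        }
        try {
            jobBody.run();
        } finally {
            // Assumption in this model: afterJob fires even when the body fails.
            for (JobListener listener : listeners) {
                listener.afterJob(jobName);
            }
        }
    }

    public static void main(String[] args) {
        List<String> events = new ArrayList<>();
        JobListener jobListener = new JobListener() {
            @Override public void beforeJob(String jobName) { events.add("before " + jobName); }
            @Override public void afterJob(String jobName) { events.add("after " + jobName); }
        };
        launch("sampleJob", () -> events.add("run steps"), Arrays.asList(jobListener));
        System.out.println(events); // [before sampleJob, run steps, after sampleJob]
    }
}
```

In a real project, the directory creation or file move mentioned earlier would go in the before hook, and any cleanup or notification logic in the after hook.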

Spring Batch Hands-on Examples

This Spring Batch Tutorial series is based on Spring Batch 3.0.1.RELEASE.

Spring Batch - Read a CSV file and write to an XML file (with listener)

Example showing usage of FlatFileItemReader, StaxEventItemWriter, ItemProcessor and JobExecutionListener.

Spring Batch - Read an XML file and write to a CSV file (with listener)

Example showing usage of StaxEventItemReader, FlatFileItemWriter, ItemProcessor and JobExecutionListener.

Spring Batch - Read an XML file and write to MySQL Database

Example showing usage of StaxEventItemReader, JdbcBatchItemWriter, ItemProcessor and JobExecutionListener.

Spring Batch - Read From MySQL database & write to CSV file

Example showing usage of JdbcCursorItemReader, FlatFileItemWriter, ItemProcessor and JobExecutionListener.

Spring Batch - MultiResourceItemReader & HibernateItemWriter Example

Example showing usage of MultiResourceItemReader, HibernateItemWriter, ItemProcessor and JobExecutionListener.

Spring Batch & Quartz Scheduler Example (Tasklet Usage)

Reads files whose names can differ on each scheduled run. The example demonstrates Quartz integration with Spring Batch and the use of a Tasklet.